Challenges

- Challenge 1: Here

- Challenge 2: This article

- Challenge 3: Here

- Challenge 4: Here

- Challenge 5: Here

These challenges are a fantastic hackathon-style approach to learning KQL. Every week poses a new and unique approach to different KQL commands, and as the weeks have progressed I’ve learned some interesting tricks. Let’s take a look at challenge 2.

General advice

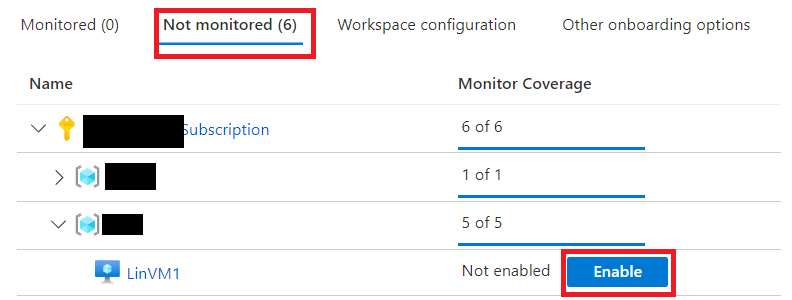

I’ve mentioned previously that hints can be accessed from the detective UI. From this challenge onwards the hints provide critical information; without them you have to make assumptions, and an incorrect assumption will throw you off the correct solution.

This is also the first challenge that has multiple ways to get to the answer. In this post I will be discussing the more interesting one.

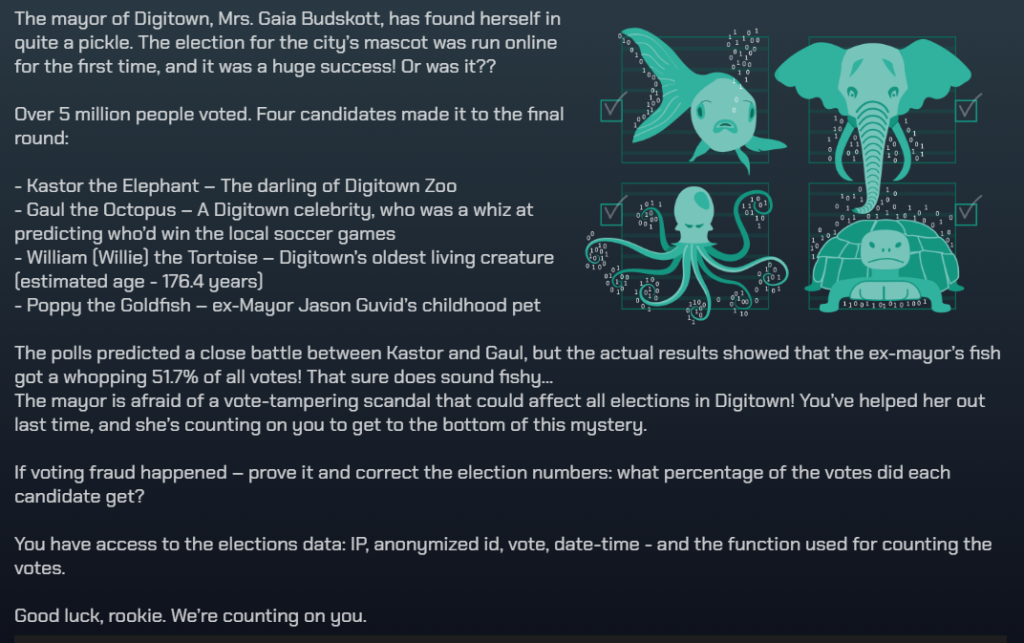

Challenge 2: Election fraud?

The second challenge ramps up the difficulty: you’ve been asked to verify the results of the recent election for the town mascot.

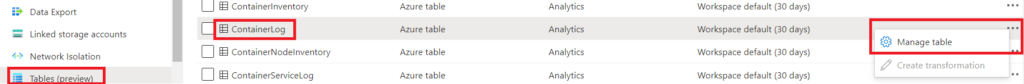

The KQL features that will be helpful are anomaly detection, particularly series_decompose_anomalies, and bin. Alternatively, you can make use of format_datetime and a little bit of guesswork.
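To get a feel for what series_decompose_anomalies returns before pointing it at the real votes, here is a minimal sketch on made-up data. The `range`-generated series and the column names `xs`, `ys`, `flag`, `score` and `baseline` are my own illustration and are not part of the challenge data.

```kusto
// Toy data (hypothetical, not from the challenge): a near-flat series with one obvious spike.
range x from 1 to 50 step 1
| extend y = iff(x == 30, 500, 10 + x % 3)
| order by x asc
// Pack the rows into arrays, because the series functions operate on dynamic arrays.
| summarize xs = make_list(x), ys = make_list(y)
// Tuple form: anomaly flag (+1, -1 or 0), anomaly score, and the expected baseline.
| extend (flag, score, baseline) = series_decompose_anomalies(ys)
// Unpack the arrays back into one row per point and keep only the flagged points.
| mv-expand xs to typeof(long), ys to typeof(long), flag to typeof(long), score to typeof(real)
| where flag != 0
```

The spike at x == 30 is the point I would expect to be flagged here. The same pack, decompose, unpack pattern is what the challenge query below applies per proxy IP.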

//This query analyzes the votes for the problem candidate and looks for anomalies. If any are found, they are removed from the final count to give the correct results for the election!

let compromisedProxies = Votes

| where vote == "Poppy"

| summarize Count = count() by bin(Timestamp, 1h), via_ip

| summarize votesPoppy = make_list(Count), Timestamp = make_list(Timestamp) by via_ip

| extend outliers = series_decompose_anomalies(votesPoppy)

| mv-expand Timestamp, votesPoppy, outliers

| where outliers == 1

| distinct via_ip;

Votes

| where not(via_ip in (compromisedProxies) and vote == "Poppy")

| summarize Count=count() by vote

| as hint.materialized=true T

| extend Total = toscalar(T | summarize sum(Count))

| project vote, Percentage = round(Count*100.0 / Total, 1), Count

| order by Count
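For comparison, here is a rough sketch of what the format_datetime route might look like, assuming the same Votes table and columns as the query above. The per-minute bucket format, the `Minute` column name and the `take 20` cut-off are my own illustrative choices, and the "guesswork" is deciding by eye which buckets look too busy to be genuine.

```kusto
Votes
| where vote == "Poppy"
// Truncate each timestamp to the minute as a string bucket (the format is an illustrative choice).
| extend Minute = format_datetime(Timestamp, "yyyy-MM-dd HH:mm")
// Count Poppy votes per proxy IP per minute.
| summarize Count = count() by via_ip, Minute
// Surface the busiest buckets first so suspicious bursts stand out.
| order by Count desc
| take 20
```

Any proxy IPs that dominate the top of this list are candidates for the same exclusion step used in the main query.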

Digitown can sleep easy knowing that they have their correct town mascot due to your efforts! Stay tuned for some excitement in challenge 3.
